Click here for a free recording of the event!

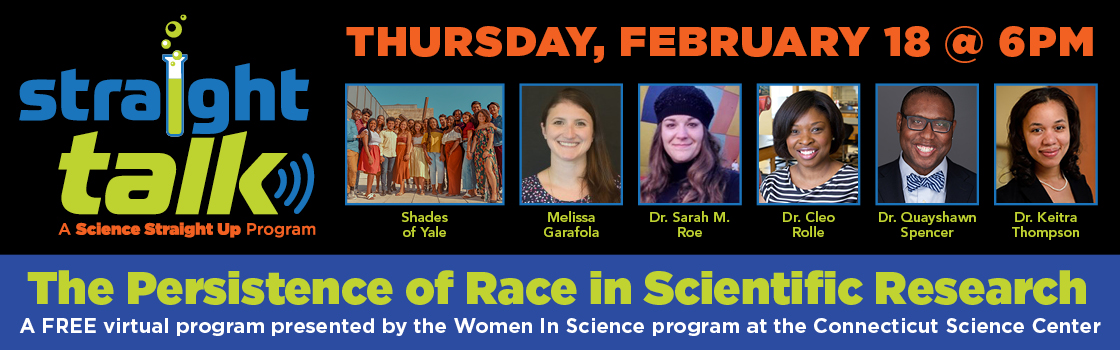

Straight Talk: The Persistence of Race in Scientific Research

Join us for another amazing, interactive discussion of one of today’s hottest topics, led by our esteemed panel of guests. This conversation between philosophers and scientists will interrogate not only some of the enduring ideologies of race in America but also the reasons for their continued resonance within the scientific community, particularly in genetic research.

The Black National Anthem will be performed by Shades of Yale.

Guests include:

Melissa Garafola, Connecticut Science Center

Genomics Educator

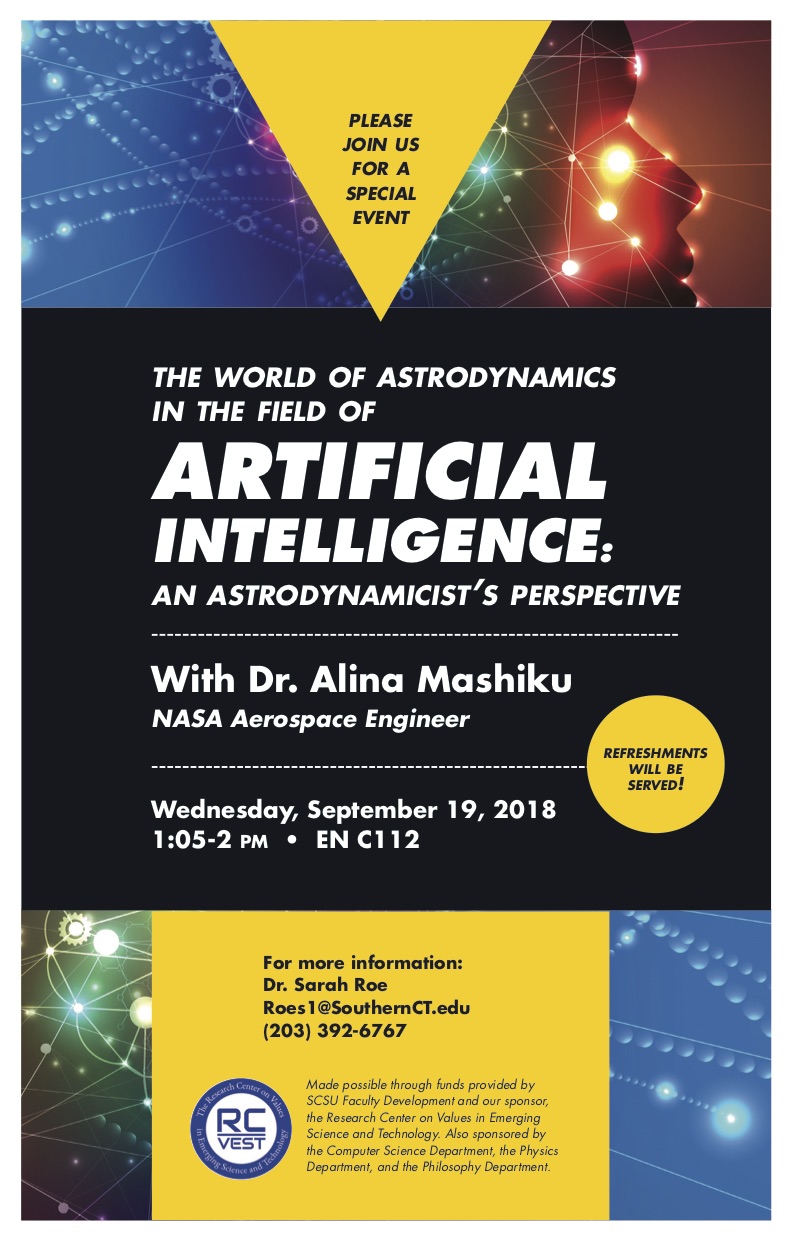

Sarah M. Roe, PhD, Southern Connecticut State University

Director of the Research Center on Values in Emerging Science and Technology

Cleo Rolle, PhD, Capital Community College

Assistant Professor, Biotechnology Program Coordinator

Quayshawn Spencer, PhD, University of Pennsylvania

Robert S. Blank Presidential Associate Professor of Philosophy

Keitra Thompson, DNP, MSN, FNP-BC, Yale School of Medicine

Postdoctoral Fellow, National Clinician Scholars Program/VA Advanced Fellowship Program